In a paper published in Physical Review Letters, titled

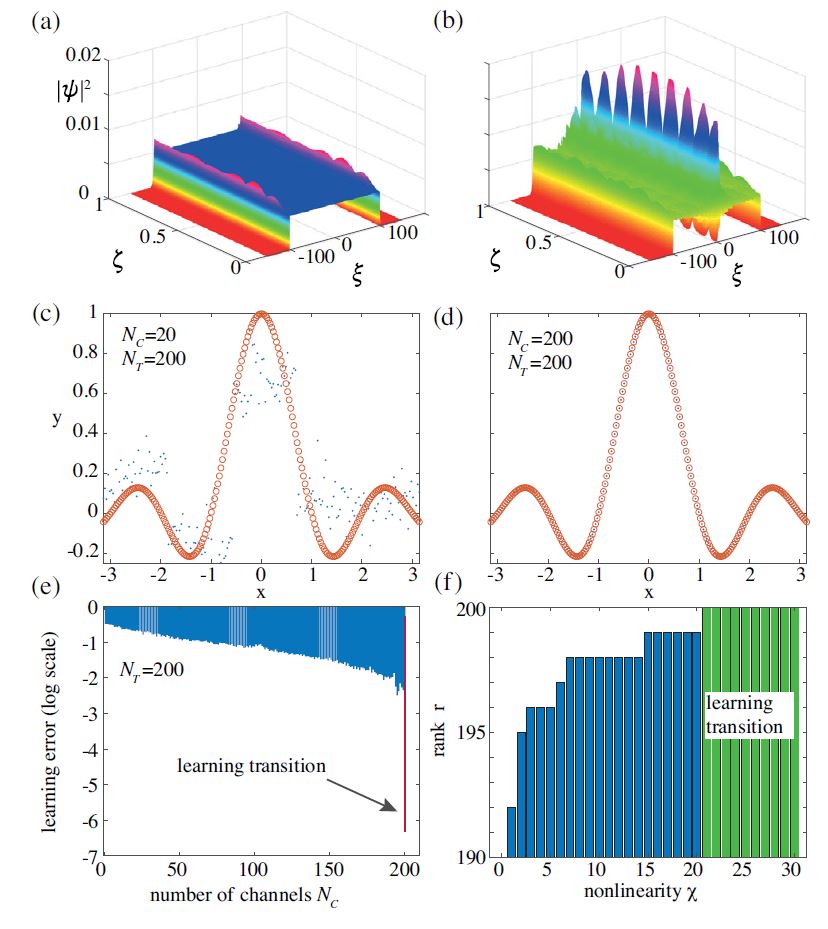

we study artificial neural networks with nonlinear waves as a computing reservoir. We discuss universality and the conditions to learn a dataset in terms of output channels and nonlinearity. A feed-forward three-layered model, with an encoding input layer, a wave layer, and a decoding readout, behaves as a conventional neural network in approximating mathematical functions, real-world datasets, and universal Boolean gates.

The rank of the transmission matrix has a fundamental role in assessing the learning abilities of the wave.

For a given set of training points, a threshold nonlinearity for universal interpolation exists. When considering the nonlinear Schrödinger equation, the use of highly nonlinear regimes implies that solitons, rogue waves, and shock waves have a leading role in training and computing. Our results may enable the realization of novel machine learning devices by using diverse physical systems, such as nonlinear optics, hydrodynamics, polaritonics, and Bose-Einstein condensates. The application of these concepts to photonics opens the way to a large class of accelerators and new computational paradigms. In complex wave systems, such as multimodal fibers, integrated optical circuits, random and topological devices, and metasurfaces, nonlinear waves can be employed to perform computation and solve complex combinatorial optimization problems.
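To make the three-layer picture concrete, here is a minimal numerical sketch, not the implementation used in the paper. It assumes a normalized nonlinear Schrödinger equation, i ψ_z + ½ ψ_xx + χ|ψ|²ψ = 0, integrated with a split-step Fourier scheme; a phase-encoded Gaussian beam as the input layer; intensities sampled at a few grid points as output channels; and a linear readout trained by least squares. The function names, the encoding, the target function, and all parameter values (`chi`, `dz`, grid size, number of channels) are hypothetical choices made for illustration. The matrix `H` of channel readouts over the training inputs is taken here as a stand-in for the transmission matrix, so its rank can be inspected directly.

```python
import numpy as np

def encode(x, grid, width=2.0):
    """Encoding layer (assumed): Gaussian beam carrying an input-dependent phase."""
    return np.exp(-(grid / width) ** 2) * np.exp(1j * np.pi * x * np.sin(grid))

def wave_layer(psi0, length, chi=5.0, dz=0.005, steps=200):
    """Wave layer: split-step Fourier integration of
    i psi_z + (1/2) psi_xx + chi |psi|^2 psi = 0 on a periodic grid."""
    n = psi0.size
    k = 2 * np.pi * np.fft.fftfreq(n, d=length / n)
    half_linear = np.exp(-0.25j * k ** 2 * dz)  # dispersion over dz/2
    psi = psi0.astype(complex)
    for _ in range(steps):
        psi = np.fft.ifft(half_linear * np.fft.fft(psi))
        psi = psi * np.exp(1j * chi * np.abs(psi) ** 2 * dz)  # Kerr phase over dz
        psi = np.fft.ifft(half_linear * np.fft.fft(psi))
    return psi

def readout_features(psi, channels):
    """Decoding layer input: intensity detected at the selected output channels."""
    return np.abs(psi[channels]) ** 2

# Transverse grid and output channels (illustrative values).
n, length = 256, 20.0
grid = np.linspace(-length / 2, length / 2, n, endpoint=False)
channels = np.linspace(0, n - 1, 16).astype(int)

# Toy training set: learn y = sin(pi x) from 8 points.
x_train = np.linspace(-1.0, 1.0, 8)
y_train = np.sin(np.pi * x_train)

# One row per training input, one column per output channel.
H = np.array([
    readout_features(wave_layer(encode(x, grid), length), channels)
    for x in x_train
])

# Only the linear readout is trained; the wave itself is left untouched.
weights, *_ = np.linalg.lstsq(H, y_train, rcond=None)
rank = np.linalg.matrix_rank(H)
train_error = np.linalg.norm(H @ weights - y_train)

print("rank of H:", rank, "of at most", min(H.shape))
print("training error:", train_error)
```

In this sketch, exact interpolation of the training set requires the rank of `H` to reach the number of training points, which is why both the number of output channels and the strength of the nonlinearity `chi` matter. Lowering `chi` toward zero or reducing the number of channels is a simple way to explore how the rank and the training error respond.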

The paper was selected as an Editors’ Suggestion and Featured in Physics.

See also